By Xiao Ma, PhD Candidate, Information Science

In 2002, Blascovich et al. published a paper, Immersive Virtual Environment Technology as a Methodological Tool for Social Psychology, outlining a vision in which Virtual Reality (VR) could address several long-standing methodological problems in social psychology, such as non-representative participant samples and lack of replication [2].

However, more than 15 years later, when we reviewed experiments conducted in VR, we found that most participants were still drawn from the college population and tended to be very WEIRD (Western, Educated, Industrialized, Rich and Democratic). Nor could any of the VR studies be easily replicated, because of differences in software and hardware across labs, as well as the constraints of physical lab space.

Conducting Experiments Online, and in VR

There is tremendous value in being able to run large-scale experiments online quickly. A/B testing, probably one of the best-known forms of online experimentation, has transformed the web as we know it. In the social sciences, conducting crowdsourced behavioral experiments online has also been accepted as an important method. Key advantages include: (1) quick access to a diverse pool of subjects; (2) low cost; (3) a faster theory/experiment cycle [3].

At the same time, social scientists have long been excited about the potential of VR technology for running experiments.

VR Experiments + Crowdsourcing

There is an urgent need to bring VR experiments truly online — participants should be able to join from anywhere around the world.

As researchers at Cornell Tech (see the full team list at the end), we asked ourselves:

Can we use crowdsourcing techniques to run VR experiments on the web?

Such a thought experiment raises more questions:

- Can we reach enough people with VR devices in the wild?

- Are their demographics more diverse than the previous VR study participants (in terms of age, gender, education level, income, etc.)?

- What technologies are available to developers to deploy and run crowdsourced experiments?

- Can we develop VR experiments that run remotely and independently without an experimenter present?

We are happy to report that after a year of work, we can answer the questions above. What we learned is in an upcoming paper, Web-Based VR Experiments Powered by the Crowd, to be presented next week at The Web Conference 2018 (WWW) in Lyon, France (a pre-print is available through arXiv.org).

In short, my research team designed a new recruitment method and created a panel of 242 VR-eligible crowdworkers through Amazon Mechanical Turk. We showed that this population is more diverse than the average college population in key demographic categories, and replicated (some successfully) three classic studies in VR (more details below).

We open sourced (GitHub repos coming online soon!) the experiment data log, as well as the software (all in JavaScript), for easy replication and adaptation in future studies.

Some Highlights from Our Findings

Here we provide a few highlights from our findings, including the distribution of VR devices, panel demographics, and a brief summary of one of the experiments we replicated. For more details, please refer to the paper itself.

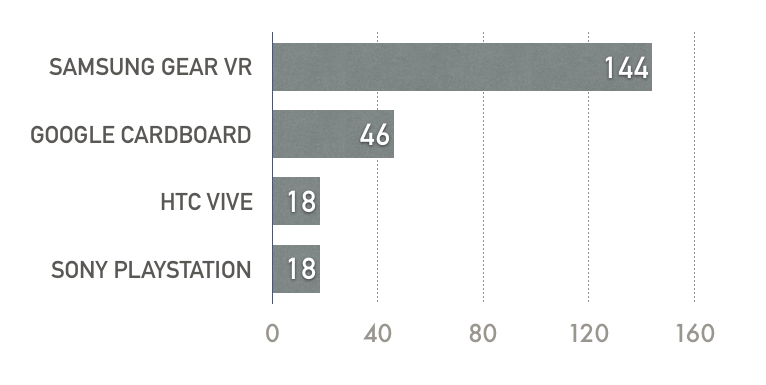

The Types of VR Devices

We recruited 242 VR-eligible workers through Amazon Mechanical Turk over a period of 13 days. Below is a breakdown of the types of VR devices owned by these crowdworkers.

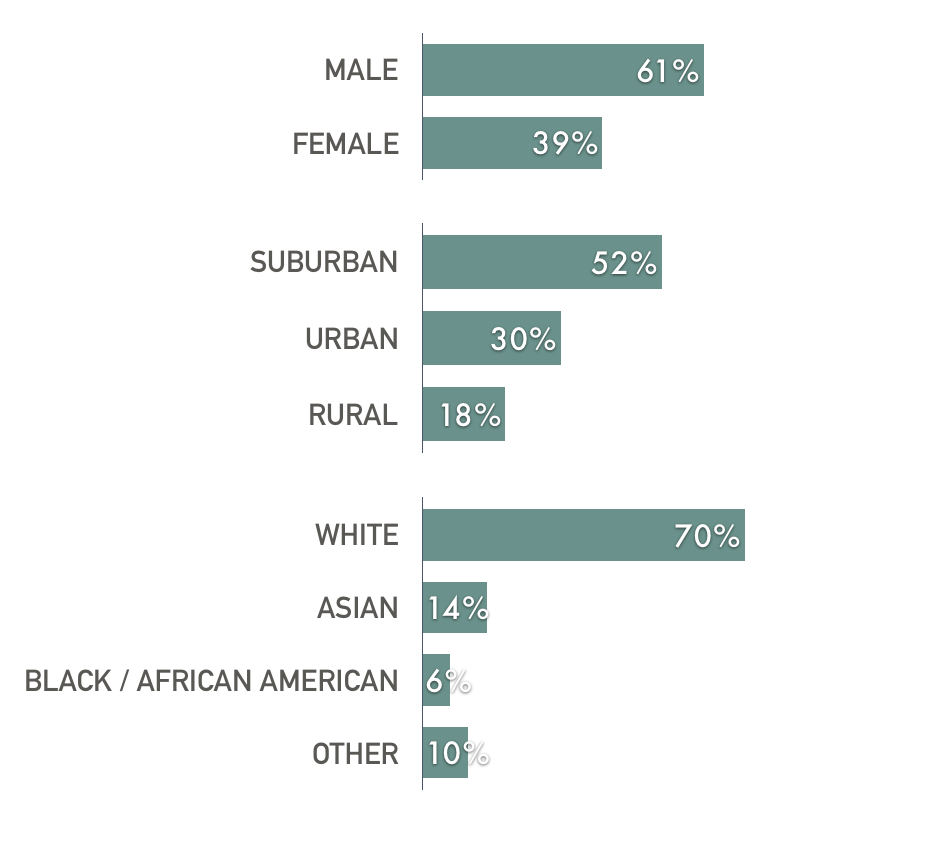

Demographics of VR-eligible Panel

We also surveyed the panel for their demographic information. We show that this panel is more diverse than the typical college participant population in terms of age, education level, residential area, and household income. For example, the panel reported ages ranging from 18 to 78 (median 32), and education levels ranging from some college (30%) and bachelor’s (30%) to advanced degrees. A majority (56%) reported household income between $30k and $80k (for reference, the median US household income in 2016 was $56k).

Replicating Milgram in VR Online

One of the studies we replicated in VR was Milgram et al.’s study on the drawing power of crowds [1].

In 1969, Milgram et al. conducted a study on the streets of New York, showing that a crowd can influence people’s behavior and draw people into it [1]. In the study, the researchers hired actors to serve as a stimulus crowd that looked up at the sky, and recorded the number of passers-by who followed the crowd’s gaze.

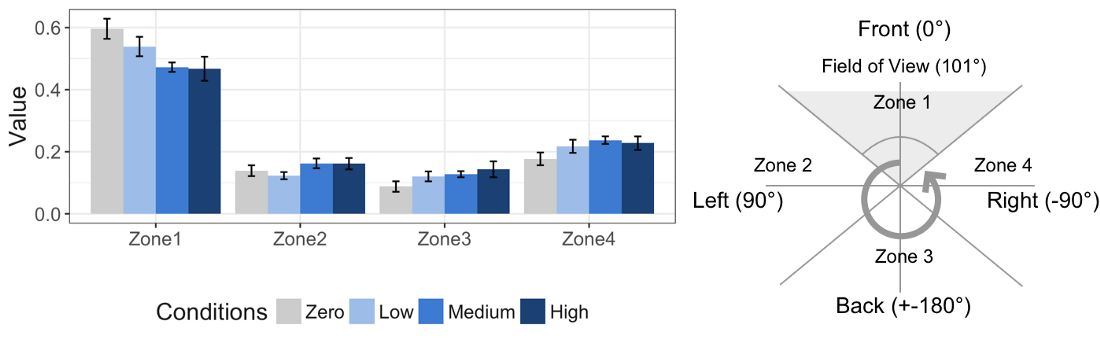

We replicated the study in VR by giving each participant a random object-finding task while placing ten static avatars in the environment as the stimulus crowd, facing different directions. In the background, we logged each participant’s head orientation five times per second as an approximation of their gaze direction.
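To make the logging step concrete, here is a minimal JavaScript sketch of sampling head orientation at five samples per second. It is an illustration only, not the study's released code: `createGazeLogger`, `record`, `getSamples`, and `normalizeYaw` are hypothetical names, and the sketch assumes the render loop can supply a timestamp in milliseconds and a head yaw angle in degrees.

```javascript
// Hypothetical sketch: keep roughly five head-orientation samples per second.
const SAMPLE_INTERVAL_MS = 200; // 1000 ms / 5 samples

// Map any angle in degrees to the range [-180, 180).
function normalizeYaw(deg) {
  return ((((deg + 180) % 360) + 360) % 360) - 180;
}

function createGazeLogger(intervalMs = SAMPLE_INTERVAL_MS) {
  const samples = [];
  let nextDueMs = null; // time at which the next sample should be taken

  return {
    // Call once per rendered frame; returns true if a sample was stored.
    record(nowMs, yawDeg) {
      if (nextDueMs !== null && nowMs < nextDueMs) {
        return false; // too early, skip this frame
      }
      if (nextDueMs === null) {
        nextDueMs = nowMs; // the first frame starts the schedule
      }
      // Advance on a fixed schedule so the average rate does not drift.
      while (nextDueMs <= nowMs) {
        nextDueMs += intervalMs;
      }
      samples.push({ t: nowMs, yawDeg: normalizeYaw(yawDeg) });
      return true;
    },
    // Return a copy so callers cannot mutate the internal log.
    getSamples() {
      return samples.slice();
    },
  };
}
```

Calling `record` on every rendered frame keeps the stored rate at about five samples per second regardless of the display's frame rate, which is one simple way to get evenly spaced samples for later analysis.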

We launched the study on Amazon Mechanical Turk and kept it open for seven days. We received 56 valid and unique submissions in total, and paid $5 per submission.

What we found was quite interesting: when more avatars faced the back, participants spent more time exploring areas of the 360-degree scene that were outside their default field of view. In other words, they were drawn to look in the direction the avatars were facing.

More concretely, the experiment had four conditions, Zero, Low, Medium and High, each indicating the number of avatars facing toward the back of the participant (toward Zone 3). The graph above shows that as conditions change from Zero to High, participants’ gaze lies more often outside Zone 1 and explores more of Zone 3, following the stimulus crowd’s gaze.
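The per-condition comparison can be sketched in JavaScript as follows. This is an illustration under stated assumptions, not the paper's analysis code: the zone boundaries (Zone 1 within 60 degrees of the starting forward direction, Zone 3 at 120 degrees or more, Zone 2 in between) are hypothetical, as are the function names and the `{ condition, samples }` record shape. Yaw is assumed to be in degrees in the range [-180, 180], with 0 as the participant's initial facing direction.

```javascript
// Hypothetical zone boundaries; the paper's actual definitions may differ.
function zoneOf(yawDeg) {
  const offset = Math.abs(yawDeg);
  if (offset <= 60) return 1; // assumed default field of view (front)
  if (offset >= 120) return 3; // assumed back region
  return 2; // assumed side regions
}

// Share of samples in each zone for one participant.
// With evenly spaced samples, this approximates the share of time.
function zoneShares(samples) {
  const counts = { zone1: 0, zone2: 0, zone3: 0 };
  for (const s of samples) {
    counts["zone" + zoneOf(s.yawDeg)] += 1;
  }
  const n = samples.length || 1; // avoid dividing by zero on empty logs
  return {
    zone1: counts.zone1 / n,
    zone2: counts.zone2 / n,
    zone3: counts.zone3 / n,
  };
}

// Average the per-participant shares within each condition.
function meanSharesByCondition(participants) {
  const sums = new Map();
  for (const p of participants) {
    const shares = zoneShares(p.samples);
    const acc = sums.get(p.condition) || { n: 0, zone1: 0, zone2: 0, zone3: 0 };
    acc.n += 1;
    acc.zone1 += shares.zone1;
    acc.zone2 += shares.zone2;
    acc.zone3 += shares.zone3;
    sums.set(p.condition, acc);
  }
  const result = {};
  for (const [condition, acc] of sums) {
    result[condition] = {
      zone1: acc.zone1 / acc.n,
      zone2: acc.zone2 / acc.n,
      zone3: acc.zone3 / acc.n,
    };
  }
  return result;
}
```

Averaging per participant first, rather than pooling all samples, keeps a participant with a longer session from dominating a condition's result. A rising `zone3` share from Zero to High would correspond to the pattern described above.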

Such an observation has important implications for avatar design and social VR applications. Being able to run these studies quickly and cheaply in VR can greatly speed up the design and research cycle for the nascent social VR space.

Final Words

Despite our success in running the first crowdsourced experiments in VR, challenges remain. Of the three experiments we replicated, we were only able to fully reproduce the results of Milgram et al. [1]. The first experiment, on the restorative effect of natural and urban environments, was partially reproduced, and in our second experiment we failed to reproduce the result from the original Proteus effect paper [4].

In addition, the experiments we ran (roughly 60 participants per experiment) were still limited in scale, and it is hard to replicate these studies without quickly exhausting the eligible participant pool.

However, with the launch of more affordable, stand-alone devices such as Oculus Go, as well as improvements and price cuts from other companies, it is reasonable to expect that the number of people with access to VR devices at home will continue to grow, hinting at a future of large-scale VR experimentation online. We hope our work has laid a good foundation for achieving this vision. And remember, the data and software are open sourced for easy replication and adaptation.

References

[1] Milgram, S., Bickman, L., & Berkowitz, L. (1969). Note on the drawing power of crowds of different size. Journal of Personality and Social Psychology, 13(2), 79.

[2] Blascovich, J., Loomis, J., Beall, A. C., Swinth, K. R., Hoyt, C. L., & Bailenson, J. N. (2002). Immersive virtual environment technology as a methodological tool for social psychology. Psychological Inquiry, 13(2), 103–124.

[3] Mason, W., & Suri, S. (2012). Conducting behavioral research on Amazon’s Mechanical Turk. Behavior Research Methods, 44(1), 1–23.

[4] Yee, N., & Bailenson, J. (2007). The Proteus effect: The effect of transformed self-representation on behavior. Human Communication Research, 33(3), 271–290.

Research Team

Xiao Ma, Megan Cackett, Leslie Park, Eric Chien, Mor Naaman

Web-Based VR Experiments Powered by the Crowd, The Web Conference 2018
